Large language model agents now execute long, tool-using tasks where final outcome checks can arrive too late for intervention. PrefixGuard is a trace-to-monitor framework with an offline StepView induction step followed by supervised monitor training. StepView induces deterministic typed-step adapters from raw trace samples, and the monitor learns an event abstraction and prefix-risk scorer from terminal outcomes. Across WebArena, τ2-Bench, SkillsBench, and TerminalBench, the strongest PrefixGuard monitors reach 0.900/0.710/0.533/0.557 AUPRC. They improve over raw-text controls by an average of +0.137 AUPRC when comparing the strongest backend in each representation, while LLM judges remain substantially weaker under the same prefix-warning protocol.

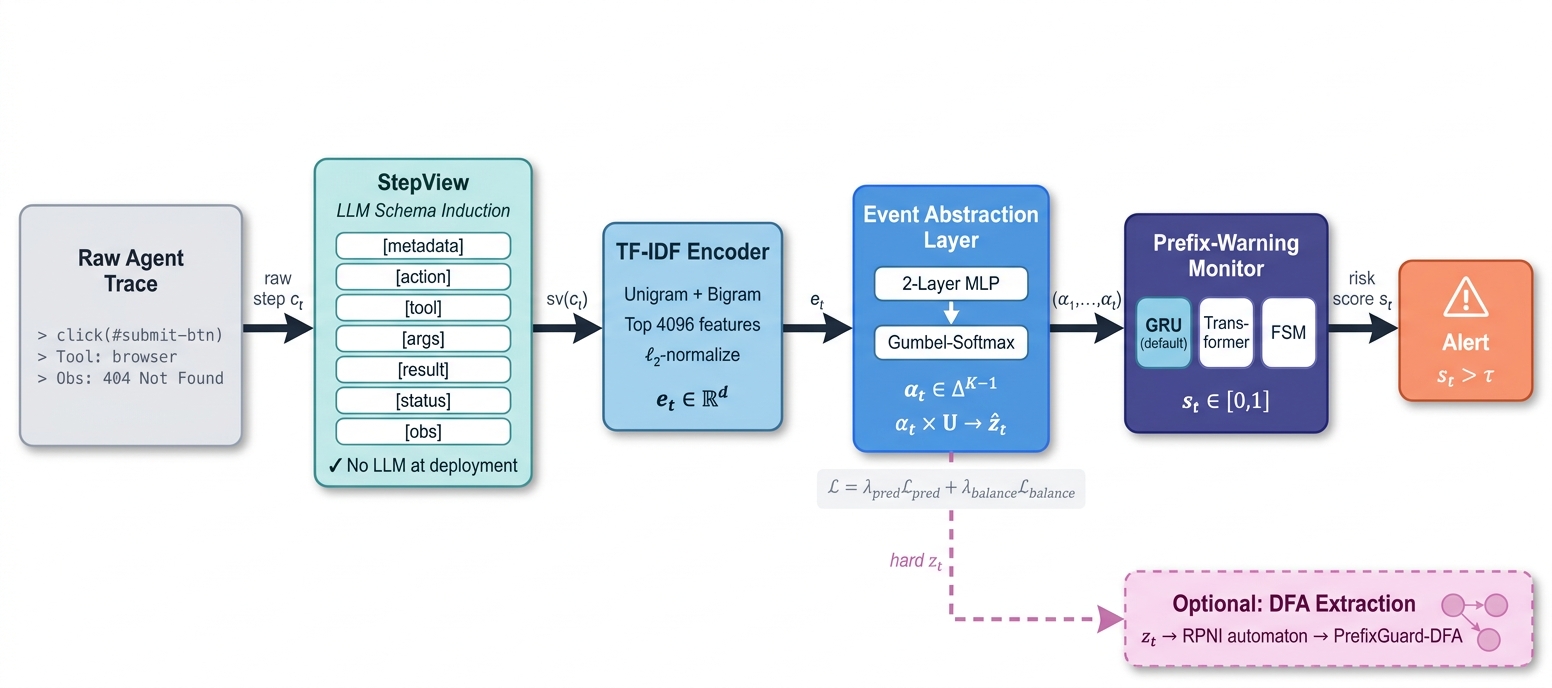

PrefixGuard converts heterogeneous raw traces into typed StepView records, encodes each step with a frozen TF-IDF encoder, learns a discrete event abstraction with Gumbel-softmax, and scores risk online with a GRU, Transformer, or finite-state backend. Hard learned symbols can also be compiled into PrefixGuard-DFA for finite-state audit.

LLM-assisted offline adapter induction maps raw browser, dialogue, coding, and CLI traces into fixed typed fields.
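As a rough illustration of what a deterministic typed-step adapter might look like, the sketch below maps a made-up raw browser log into fixed typed fields. The `StepView` field names, the raw keys (`op`, `selector`, `value`, `role`), and the `browser_adapter` function are all hypothetical; the source does not list the actual StepView schema.

```python
from dataclasses import dataclass
from typing import Any, Dict, List


@dataclass(frozen=True)
class StepView:
    """Hypothetical typed step record; the field names are illustrative only."""
    index: int   # position of the step in the trace
    actor: str   # who produced the step, e.g. "agent" or "env"
    action: str  # normalized action type, e.g. "click" or "type"
    target: str  # element, tool, or command the action touches
    text: str    # free-text payload (typed text, message, tool output)


def browser_adapter(raw_trace: List[Dict[str, Any]]) -> List[StepView]:
    """Map a hypothetical raw browser log to StepView records with fixed rules."""
    steps = []
    for i, event in enumerate(raw_trace):
        steps.append(StepView(
            index=i,
            actor=str(event.get("role", "agent")),
            action=str(event.get("op", "unknown")).lower(),
            target=str(event.get("selector", "")),
            text=str(event.get("value", "")),
        ))
    return steps


# Assumed raw format: one dict per logged browser event.
raw = [
    {"op": "CLICK", "selector": "#search"},
    {"op": "Type", "selector": "#search", "value": "restaurants near CMU"},
]
steps = browser_adapter(raw)
print(steps[1].action, "|", steps[1].text)
```

Because the adapter is plain rule-based code, running it twice on the same raw trace yields identical records, which is the property the word "deterministic" refers to here.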

A differentiable event abstraction layer learns a failure-aligned symbol alphabet from the prefix warning objective.
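For readers unfamiliar with the Gumbel-softmax relaxation, here is a minimal standard-library sketch of the sampling step only. The function name, the three-symbol alphabet, and the temperature value are assumptions for illustration; the source does not give the alphabet size, the temperature schedule, or the training loop.

```python
import math
import random
from typing import List, Tuple


def gumbel_softmax(logits: List[float], tau: float,
                   rng: random.Random) -> Tuple[List[float], int]:
    """Return (relaxed one-hot probabilities, index of the hard symbol)."""
    noisy = []
    for logit in logits:
        # Gumbel(0, 1) noise is -log(-log(U)) with U ~ Uniform(0, 1).
        u = max(rng.random(), 1e-12)  # clamp away from 0 to keep log finite
        noisy.append((logit - math.log(-math.log(u))) / tau)
    # Numerically stable softmax over the perturbed, temperature-scaled logits.
    top = max(noisy)
    exps = [math.exp(v - top) for v in noisy]
    total = sum(exps)
    soft = [e / total for e in exps]
    hard = max(range(len(soft)), key=soft.__getitem__)
    return soft, hard


rng = random.Random(0)
logits = [2.0, 0.5, -1.0]  # hypothetical scores for a 3-symbol alphabet
soft, hard = gumbel_softmax(logits, tau=0.5, rng=rng)

# Over many draws the hard symbol follows softmax(logits).
counts = [0, 0, 0]
for _ in range(2000):
    counts[gumbel_softmax(logits, tau=0.5, rng=rng)[1]] += 1
print(counts)
```

In a differentiable setting the soft vector carries gradients while the hard index gives the discrete symbol; lowering `tau` pushes the soft vector closer to one-hot.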

The monitor produces a causal risk score after each new step, with no LLM inference required at deployment.
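The "causal" property can be made concrete with a toy scorer: the risk after step t depends only on steps 1 through t. The class below is a hand-weighted single-unit recurrent cell, not the trained GRU or Transformer backend; the class name, the weights, and the two-dimensional step features are placeholders chosen for illustration.

```python
import math
from typing import List, Sequence


class OnlineRiskMonitor:
    """Toy causal scorer: one recurrent update per step, then a sigmoid readout.

    All weights are hand-picked placeholders, not learned parameters.
    """

    def __init__(self, w_in: Sequence[float], w_rec: float,
                 w_out: float, bias: float) -> None:
        self.w_in = list(w_in)
        self.w_rec = w_rec
        self.w_out = w_out
        self.bias = bias
        self.h = 0.0  # hidden state summarizing the prefix seen so far

    def update(self, x: Sequence[float]) -> float:
        """Consume one step's features and return the current risk in (0, 1)."""
        drive = sum(w * v for w, v in zip(self.w_in, x))
        self.h = math.tanh(self.w_rec * self.h + drive)
        return 1.0 / (1.0 + math.exp(-(self.w_out * self.h + self.bias)))


def make_monitor() -> OnlineRiskMonitor:
    return OnlineRiskMonitor(w_in=[2.0, 1.0], w_rec=0.8, w_out=3.0, bias=-1.5)


def score_prefixes(steps: Sequence[Sequence[float]]) -> List[float]:
    """Feed steps one at a time and collect the risk after each step."""
    monitor = make_monitor()
    return [monitor.update(x) for x in steps]


# Hypothetical per-step features: [saw_error_text, repeated_previous_action]
trace = [[0.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
scores = score_prefixes(trace)
print([round(s, 3) for s in scores])
```

Scoring only the first two steps gives the same first two risks as scoring the full trace, so an alert raised at step t never needs to be revised in light of later steps.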

| Input view | Head / scorer | WebArena | τ2-Bench | SkillsBench | TerminalBench |
|---|---|---|---|---|---|
| Prompt | GPT-5.4-mini | 0.407 | 0.302 | 0.101 | 0.127 |
| Prompt | DeepSeek-V4-Pro | 0.450 | 0.396 | 0.080 | 0.107 |
| StepView activity | PPM LSTM | 0.382 ± 0.004 | 0.231 ± 0.003 | 0.089 ± 0.001 | 0.093 ± 0.000 |
| Raw text | GRU | 0.871 ± 0.004 | 0.554 ± 0.006 | 0.315 ± 0.006 | 0.370 ± 0.001 |
| StepView | PrefixGuard-DFA | 0.792 ± 0.015 | 0.316 ± 0.055 | 0.190 ± 0.021 | 0.184 ± 0.029 |
| StepView | PrefixGuard-Transformer | 0.892 ± 0.006 | 0.710 ± 0.014 | 0.478 ± 0.028 | 0.555 ± 0.006 |
| StepView | PrefixGuard-GRU | 0.900 ± 0.015 | 0.696 ± 0.004 | 0.533 ± 0.020 | 0.557 ± 0.005 |
| PG-GRU gain vs. Raw-text GRU | | +0.029 | +0.142 | +0.218 | +0.187 |

Values are AUPRC. Multi-seed rows report mean ± standard deviation over 3 seeds. Prompt baselines use zero-shot full-prefix prompts with N=200 samples.
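For reference, AUPRC is often estimated with average precision: the mean of the precision values measured at the rank of each positive example. The sketch below assumes that estimator and uses made-up labels and scores; the source does not state which AUPRC estimator or tie-handling rule was used.

```python
from typing import Sequence


def average_precision(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Average precision: mean precision at the rank of each positive.

    Ties keep their input order here; a tie-aware variant would group
    equal scores before computing precision.
    """
    n_pos = sum(labels)
    if n_pos == 0:
        raise ValueError("AUPRC is undefined without positive labels")
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    hits = 0
    total = 0.0
    for rank, i in enumerate(order, start=1):
        if labels[i] == 1:
            hits += 1
            total += hits / rank  # precision at this positive's rank
    return total / n_pos


# Made-up prefix labels (1 = prefix of a failed trajectory) and risk scores.
labels = [1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.3, 0.1]
ap = average_precision(labels, scores)
print(round(ap, 4))  # 0.8333: positives at ranks 1 and 3 give (1/1 + 2/3) / 2
```

A scorer that ranks every positive above every negative gets 1.0, while a random ranking approaches the positive rate, which is why AUPRC values are best read relative to each benchmark's base rate.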

Changing only the input representation from raw text to StepView, with the GRU backend held fixed, improves AUPRC by +0.029 (WebArena) to +0.218 (SkillsBench) across the four benchmark families.

GRU and Transformer backends give the best ranking scores. DFA extraction remains useful as an audit artifact, especially when the learned symbolic state space stays compact.

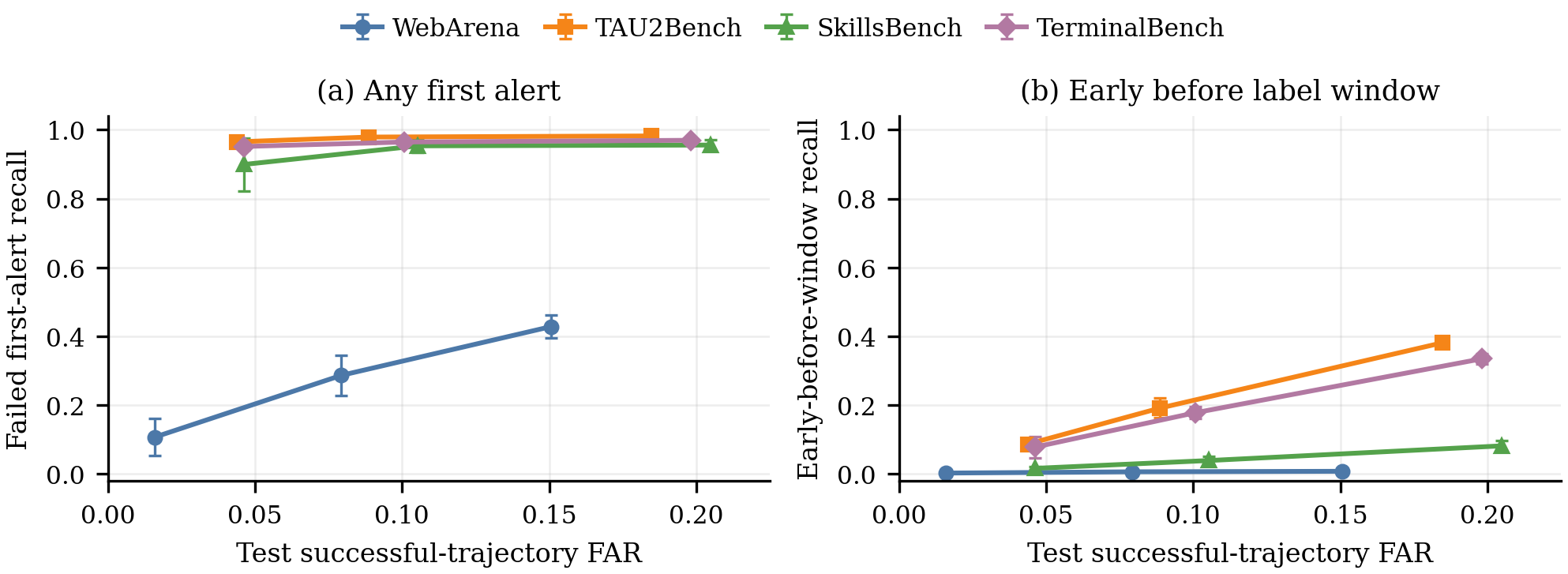

WebArena reaches high AUPRC but achieves only 0.007 early failed-trajectory recall under a 10% FAR budget. τ2-Bench and TerminalBench retain more actionable early alerts under the same diagnostic.

Zero-shot LLM prompt baselines are substantially weaker under the matched prefix-warning protocol, motivating lightweight learned monitors instead of repeated deployment-time LLM judging.

A high AUPRC is useful only when the monitor can raise alerts early enough and under a realistic false-alarm budget. The diagnostics below read the same PrefixGuard results through this deployment lens.

| Benchmark | FAR | Failed recall | Early recall | Lead fraction |
|---|---|---|---|---|
| WebArena | 0.079 | 0.287 | 0.007 | 0.026 |
| τ2-Bench | 0.089 | 0.979 | 0.192 | 0.106 |
| SkillsBench | 0.105 | 0.954 | 0.039 | 0.017 |
| TerminalBench | 0.101 | 0.965 | 0.178 | 0.215 |
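To make the column names in the table above concrete, the sketch below computes first-alert diagnostics for a fixed threshold. The definitions are assumptions, since the source does not spell them out: FAR is taken as the share of successful trajectories that raise any alert, failed recall as the share of failed trajectories that raise one, early recall as the share of failed trajectories whose first alert falls within the first `early_frac` of steps, and lead fraction as the mean remaining fraction of the trajectory at the first alert (zero when no alert fires). The trajectories are made up.

```python
from typing import Dict, List, Optional, Sequence, Tuple

Trajectory = Tuple[Sequence[float], bool]  # (per-step risk scores, failed?)


def first_alert(scores: Sequence[float], threshold: float) -> Optional[int]:
    """Return the 1-based step of the first score >= threshold, else None."""
    for t, s in enumerate(scores, start=1):
        if s >= threshold:
            return t
    return None


def alert_diagnostics(trajs: List[Trajectory], threshold: float,
                      early_frac: float = 0.5) -> Dict[str, float]:
    """First-alert diagnostics under the assumed definitions in the lead-in."""
    failed = [s for s, is_failed in trajs if is_failed]
    passed = [s for s, is_failed in trajs if not is_failed]
    if not failed or not passed:
        raise ValueError("need at least one failed and one successful trajectory")
    false_alarms = sum(first_alert(s, threshold) is not None for s in passed)
    caught = 0
    early = 0
    lead = 0.0
    for s in failed:
        t = first_alert(s, threshold)
        if t is None:
            continue  # a missed failure contributes zero lead
        caught += 1
        if t <= early_frac * len(s):
            early += 1
        lead += (len(s) - t) / len(s)
    return {
        "far": false_alarms / len(passed),
        "failed_recall": caught / len(failed),
        "early_recall": early / len(failed),
        "lead_fraction": lead / len(failed),
    }


trajs = [
    ([0.1, 0.2, 0.9, 0.95], True),   # caught late, at step 3 of 4
    ([0.85, 0.9], True),             # caught early, at step 1 of 2
    ([0.1, 0.3, 0.4], True),         # missed
    ([0.1, 0.2], False),             # quiet success
    ([0.2, 0.85, 0.1], False),       # false alarm
]
report = alert_diagnostics(trajs, threshold=0.8)
print(report)
```

In practice the threshold would be chosen on held-out data so that FAR stays within the budget, and the remaining three numbers are then read off at that operating point.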

The operating-point table shows deployable alert behavior; the observability ceiling explains when the trace itself limits recoverable warning signal; and the DFA audit shows how a finite-state monitor can be inspected after training.

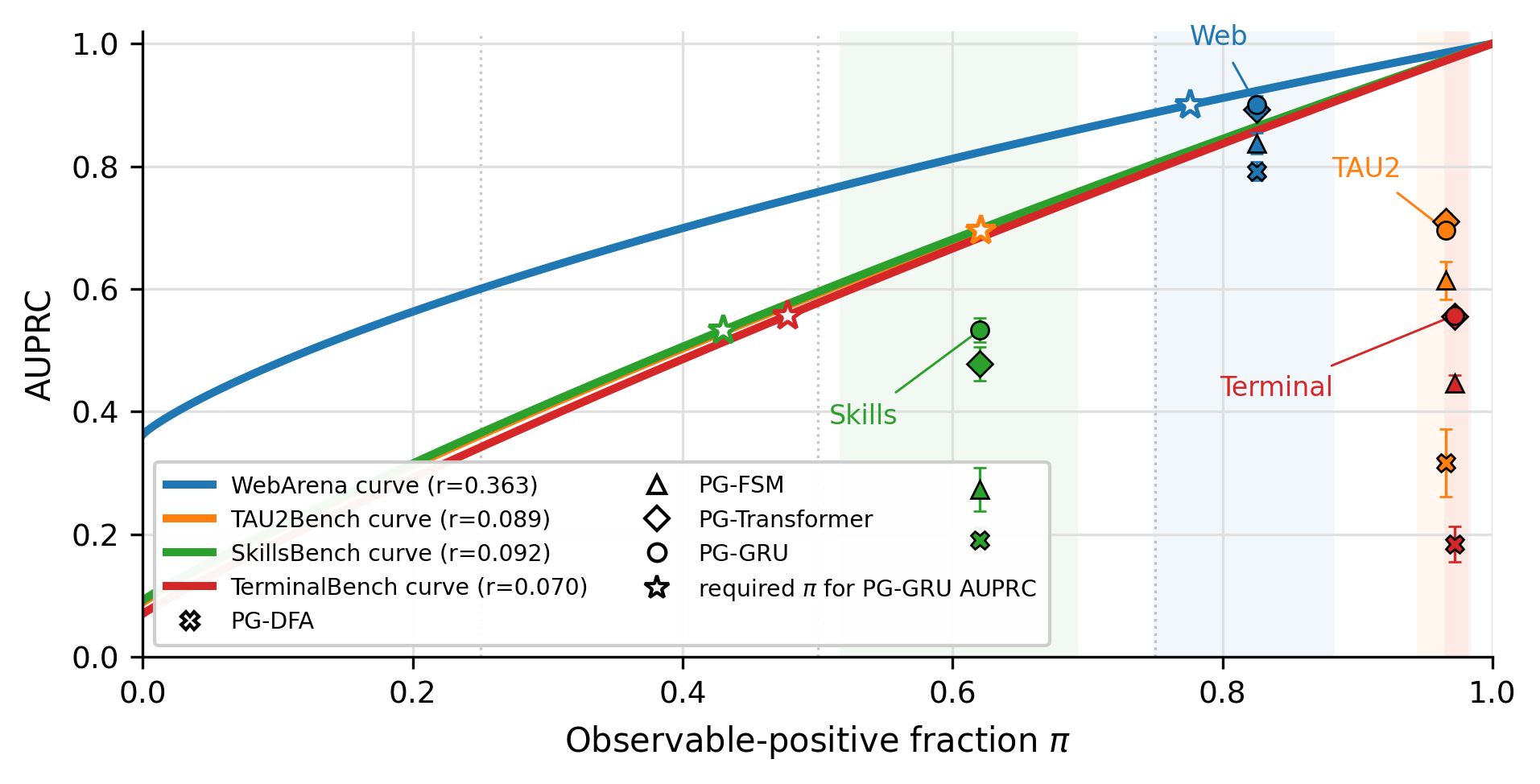

A monitor can only warn from evidence that has already appeared in the prefix. The conditional AUPRC ceiling estimates this upper bound by grouping prefixes with comparable visible evidence and asking how separable future failures remain within those groups. When the ceiling is high, missed warnings usually point to modeling or representation limitations. When the ceiling is low, the trace itself is not yet exposing enough information for any causal monitor to separate success from failure reliably.

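The ceiling is written \(\mathcal{A}(\pi, r)\), where \(r\) is the positive-prefix rate and \(\pi\) is the fraction of positive warning prefixes whose failure evidence is already observable in the current representation. The endpoints are \(\mathcal{A}(0,r)=r\) and \(\mathcal{A}(1,r)=1\): with no observable evidence the best achievable AUPRC is the base rate, and with fully observable evidence a perfect ranking is possible.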
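The closed form of the ceiling is not reproduced in this text, so the sketch below is not the paper's definition. It is one toy ranking model, chosen only because it matches the two stated endpoints: a fraction `pi` of positives is ranked perfectly at the top, and the remaining positives are shuffled uniformly among the negatives. The function name `toy_ceiling` and the numerical integration grid are assumptions.

```python
def toy_ceiling(pi: float, r: float, grid: int = 10_000) -> float:
    """Area under the precision-recall curve of a two-part toy ranking.

    Illustrative only: a fraction `pi` of positives sits at the top of the
    ranking, and the rest are mixed uniformly with the negatives.
    """
    if not (0.0 <= pi <= 1.0 and 0.0 < r <= 1.0):
        raise ValueError("need 0 <= pi <= 1 and 0 < r <= 1")
    area = 0.0
    for k in range(grid):
        rho = (k + 0.5) / grid  # midpoint rule over recall in (0, 1)
        if rho <= pi:
            precision = 1.0  # still inside the perfectly ranked block
        else:
            # Share of the shuffled pool that must be retrieved to reach rho.
            mixed = (rho - pi) / (1.0 - pi)
            precision = rho * r / (rho * r + mixed * (1.0 - r))
        area += precision / grid
    return area


r = 0.2  # made-up positive-prefix rate
for pi in (0.0, 0.25, 0.75, 1.0):
    print(pi, round(toy_ceiling(pi, r), 3))
```

Under this toy model the ceiling rises from the base rate toward 1 as more failure evidence becomes observable, which mirrors the qualitative reading given above: a low ceiling signals a limit of the trace, not of the monitor.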

This explains why ranked AUPRC and deployable alert utility can diverge. WebArena has strong ranking performance, but many failures become distinguishable only late, so a strict 10% false-alarm budget leaves little room for early intervention. In contrast, τ2-Bench and TerminalBench expose more actionable prefix cues, which is why the first-alert diagnostics retain higher failed-trajectory recall under the same low-FAR setting.

PrefixGuard-DFA is not intended to be the strongest scorer. Its role is to make the learned event abstraction inspectable. Hard event symbols are replayed through a finite-state monitor, then each state can be audited by its empirical risk, representative prefixes, outgoing transitions, and whether it corresponds to a trusted phase or a warning phase.
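The replay-and-audit step can be sketched in a few lines. Everything below is hypothetical: the transition table, the state names `s0` to `s2`, and the symbol sequences are invented, and "risk" is assumed to mean the share of visits to a state that come from failed trajectories, which may differ from the definition behind the reported numbers.

```python
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple

Transitions = Dict[Tuple[str, int], str]  # (state, symbol) -> next state


def replay(symbols: Sequence[int], transitions: Transitions,
           start: str = "s0") -> List[str]:
    """Return the state reached after each symbol.

    Unseen (state, symbol) pairs keep the current state in this sketch.
    """
    state = start
    visited = []
    for sym in symbols:
        state = transitions.get((state, sym), state)
        visited.append(state)
    return visited


def audit_states(traces: Sequence[Tuple[Sequence[int], bool]],
                 transitions: Transitions,
                 start: str = "s0") -> Dict[str, Dict[str, float]]:
    """Per-state visit count and empirical risk (share of visits from failures)."""
    visits: Dict[str, int] = defaultdict(int)
    failures: Dict[str, int] = defaultdict(int)
    for symbols, failed in traces:
        for state in replay(symbols, transitions, start):
            visits[state] += 1
            failures[state] += int(failed)
    return {q: {"visits": visits[q], "risk": failures[q] / visits[q]}
            for q in visits}


# Invented 3-state machine over a 2-symbol alphabet.
transitions = {
    ("s0", 0): "s0", ("s0", 1): "s1",
    ("s1", 0): "s0", ("s1", 1): "s2",
    ("s2", 1): "s2",
}
# Invented hard-symbol sequences with terminal outcomes (True = failed).
traces = [([0, 1, 1, 1], True), ([0, 0, 1, 0], False), ([1, 1], True)]
report = audit_states(traces, transitions)
for state in sorted(report):
    print(state, report[state])
```

Sorting states by risk and attaching a few representative prefixes to each one gives the kind of per-state table an auditor can read, with low-visit states flagged as too sparse to trust.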

The audit surfaces concrete behavioral phases rather than opaque per-prefix scores: browser reset and click-loop states in WebArena, lookup fan-out and policy handoff in τ2-Bench, dependency repair and output verification in SkillsBench, and tool-call failure or late-stage repair in TerminalBench.

The WebArena DFA has 29 states; 27 trusted states were coded from exemplar StepView prefixes and 2 low-support states were excluded. Warning states are listed first, followed by representative normal states.

| State | Behavioral phase | Risk | Eval | \(\bar{t}/T\) | Representative step |
|---|---|---|---|---|---|
| Warning states (risk ≥ 0.34) | | | | | |
| q0 | Early navigation reset | 0.857 | 544 | 0.25 | click; goto homepage |
| q28 | Explicit error message | 0.548 | 40 | 0.81 | type [out of stock...] |
| q22 | Repetitive click loop | 0.518 | 595 | 0.40 | click×6 (no type) |
| q12 | Misaligned search query | 0.510 | 643 | 0.25 | type [CMU / restaurants near CMU] |
| q24 | External-search redirect | 0.434 | 276 | 0.40 | new_tab; goto google.com; type |
| q1 | Early scroll-and-click | 0.342 | 379 | 0.25 | click; scroll [down] |
| Representative normal states (risk < 0.25) | | | | | |
| q17 | Productive backtracking | 0.085 | 56 | 0.83 | go_back×5 |
| q4 | Credential entry | 0.099 | 139 | 0.67 | type [username]; click |
| q26 | Task-specific search | 0.038 | 90 | 0.50 | type [color utility]; click |
| q8 | Long-form text entry | 0.122 | 80 | 0.74 | type [multi-sentence message] |
| q7 | Short-label selection | 0.119 | 111 | 0.75 | type [feature]; click |

The alignment is a single-coder diagnostic for one WebArena DFA seed. It is evidence that high-risk states can be behaviorally named, not a multi-coder interpretability study.

@article{huang2026prefixguard,
  title={PrefixGuard: From LLM-Agent Traces to Online Failure-Warning Monitors},
  author={Huang, Xinmiao and Hu, Jinwei and Roy, Rajarshi and Wu, Changshun and Dong, Yi and Huang, Xiaowei},
  journal={arXiv preprint arXiv:2605.06455},
  year={2026},
  doi={10.48550/arXiv.2605.06455},
  url={https://arxiv.org/abs/2605.06455}
}